"GEO" is a Racket

Some of comms' biggest agencies invented a fake optimization discipline.

When I started this newsletter, I said I was building an escape tunnel under the walls of the Thought Leadership Industrial Complex. Today we’re tunneling with dynamite, not shovels.

Ever since ChatGPT 3.5 set off an explosion of LLMs and other AI technology, communications agencies have been trying to figure out the consequences not just for their clients, but for their own businesses. Namely: what do we sell now? They needed new offerings to fit the new world.

Enter “generative engine optimization”—GEO—positioned as the successor to search engine optimization (SEO), the roughly 30-year-old practice of reverse-engineering search ranking signals to make an organization appear more prominently in results. GEO claims to do the same thing for LLMs that SEO did for search.

But it can’t. The people selling it should know it can’t. But they’re selling it anyway. And the only things getting optimized are their invoices.

A critical disclosure before we begin: I was a senior executive at Edelman from 2020 to 2021, and until recently served as a senior advisor to Weber Shandwick. I ended that advisory relationship to avoid any conflicts of interest related to what I write here. My money is where my mouth is.

SEO Has Rules, GEO Has Vibes

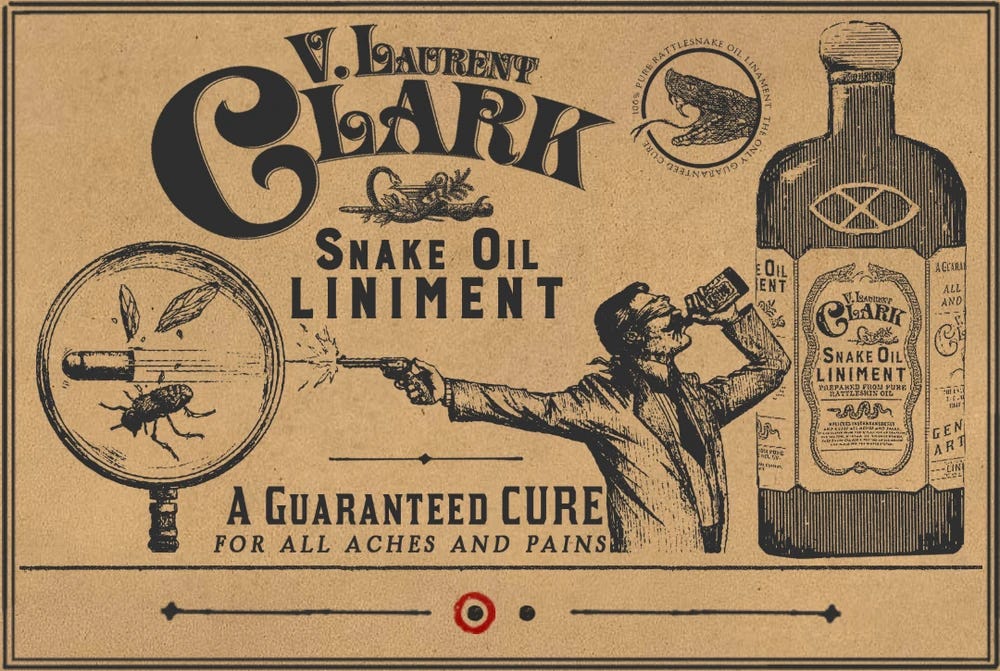

As the internet and search took off in the mid-’90s to the early 2000s, the SEO industry sprang up around it much like towns sprang up around gold discoveries in the Old West.

SEO practitioners and Google have had a fractious relationship. Google et al. would make changes, SEO practitioners would grumble and adapt, and on it went. For all its bumpiness, the industry was built on a defensible premise: the system has rules, and we’ve figured out some of them. Google told you how it worked through published details about ranking signals. You could do things like build backlinks, stuff keywords into meta descriptions, and measure what happened. It was, and is, deterministic enough to reverse-engineer and legible enough to optimize.

LLMs don’t have ranking algorithms. They have statistical weights across billions of parameters, producing probabilistic language outputs. Ask an LLM the same question twice, and you’ll often get different outputs. None of the frontier model companies can explain why their models cite what they cite. Anthropic’s own interpretability research has described the challenge of understanding how features steer model outputs as peering into a “black box.” None of this is secret.
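For the technically curious, here is a minimal sketch of what “probabilistic outputs” means in practice. It is a toy, not how any production model picks citations: real LLMs sample tokens, not sources, and the candidate names and scores below are invented for illustration. The point is only that when the output is drawn from a probability distribution, the same input can produce different results on different runs.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores into a probability distribution."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sample_source(candidates, logits, temperature=1.0, rng=random):
    """Pick one candidate at random, weighted by its softmax probability."""
    probs = softmax(logits, temperature)
    return rng.choices(candidates, weights=probs, k=1)[0]


# Hypothetical candidates and scores, invented purely for illustration.
CANDIDATES = ["outlet-a.example", "outlet-b.example", "outlet-c.example"]
LOGITS = [2.0, 1.5, 0.5]


def run_trials(n=1000, seed=0):
    """Ask the same 'question' n times and count which candidate comes back."""
    rng = random.Random(seed)
    counts = {c: 0 for c in CANDIDATES}
    for _ in range(n):
        counts[sample_source(CANDIDATES, LOGITS, rng=rng)] += 1
    return counts


if __name__ == "__main__":
    print(run_trials())
```

Run it and the highest-scoring candidate wins most often, but not every time. An intervention that nudges a score shifts the odds; it does not fix the outcome.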

And when LLMs do reach outside their own training data for answers, they’re not using some novel discovery mechanism. They’re querying traditional search engines: Bing, Brave, Google. The retrieval layer that GEO claims to have invented already exists. It’s called SEO.

So if the companies that built these systems are still publishing papers about trying to understand citation behavior, what exactly is a PR agency optimizing?

GEO’s Origin Story Is Already Crumbling

The entire GEO category traces back to a single academic paper: “GEO: Generative Engine Optimization,” out of Princeton and IIT Delhi. The paper’s headline claim, that “GEO can boost visibility by up to 40%”, has been repeated in every agency pitch deck, every trade article, and every retainer proposal that’s crossed a comms leader’s desk in the past year.

These materials blithely omit some important details.

The paper was submitted to ICLR 2024, one of the most prestigious machine learning conferences, and then withdrawn. It was later published at KDD, a data mining conference rather than a machine learning or NLP venue where the claims about LLM behavior would face the toughest scrutiny, but by then the “up to 40%” number had already been circulating for nine months via the arXiv preprint.

Plenty of time for the marketing machine to launder it into accepted fact.

The study mimicked a Bing-like workflow in which the LLM queries traditional search results first, meaning GEO depends on SEO. If you’re not already ranking in traditional search, you’re not getting cited by the generative engine. A detailed critical review found that all “optimizations” in the study were performed by AI, the keyword stuffing prompt (one of the tested “GEO strategies”) was omitted from the paper body and only discoverable in the source code, and the methodology has significant issues nobody in the industry pressure-tested because... the Princeton connection, I guess?

More recent academic work hasn’t been kinder. A subsequent study building on the Princeton framework notes that “current GEO practices are largely heuristic, relying on rules of thumb such as the use of quotations, authoritative tone, or FAQ-style structures” and that “existing metrics like the impression score do not translate directly to economic impact.”

A preprint with significant methodological issues, not yet peer-reviewed at the time, became the foundational citation for an entire service category, its headline number laundered through trade press and agency marketing until it hardened into accepted fact. It is not.

Three Studies, Three More Mortal Wounds

Let’s assume GEO were real, and there were a knowable, optimizable relationship between specific interventions and LLM citation behavior. You’d expect three things to be true: LLMs would cite sources reliably, the platforms would behave similarly enough to optimize for, and the interventions would be distinct from existing comms practice. In reality, none of those things is true.

LLMs hallucinate their own citations. A Nature Communications study published in April 2025, analyzing 58,000 statement-source pairs, found that 50–90% of LLM responses are not fully supported by the sources they cite. Even GPT-4o with web search — the best performer in the study — left approximately 30% of individual statements unsupported. Across the broader research literature, models fabricate 18–69% of their citations depending on the model and the domain. When researchers explicitly prompted ChatGPT to “only cite sources you can verify,” fabrication dropped from 47% to 41%. You are paying agencies to optimize your visibility in systems that cannot currently make reliable outside citations.

The platforms don’t agree on anything. Only 11% of domains get cited by both ChatGPT and Perplexity. ChatGPT without web browsing draws on parametric knowledge, i.e., whatever was in its training data. Perplexity runs real-time search against 200+ billion URLs. Google AI Overviews correlate with traditional SERP rankings. Claude uses Brave Search.

These aren’t variations on a theme. They’re fundamentally different architectures with different retrieval mechanisms, and the frontier model labs change those mechanisms without notice or documentation. Anyone selling “GEO strategy” is selling optimization for four different systems that work four different ways, and they can’t tell you how any of them decide what to cite.
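If you want to sanity-check an overlap figure like that 11% against your own monitoring data, the arithmetic is simple. Here is a minimal sketch, assuming overlap means the share of all distinct cited domains that show up in both engines’ citations. The source of the 11% figure may define its denominator differently, and the domain lists below are hypothetical placeholders, not real citation data.

```python
def shared_domain_share(cited_a, cited_b):
    """Share of all distinct cited domains that appear in both lists.

    Assumption: overlap = |A intersect B| / |A union B|. Other definitions
    (for example, dividing by one engine's total) give different numbers.
    """
    a, b = set(cited_a), set(cited_b)
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


# Hypothetical citation lists, invented purely for illustration.
engine_one = ["news.example", "wiki.example", "trade.example", "blog.example"]
engine_two = ["news.example", "forum.example", "video.example", "docs.example"]

if __name__ == "__main__":
    share = shared_domain_share(engine_one, engine_two)
    print(f"{share:.0%} of cited domains appear in both lists")
```

That is the thermometer side of the business: counting what got cited is easy. Nothing in this calculation tells you why either engine chose what it chose.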

The industry’s own data proves the service is redundant. This is the one that should end the conversation. Muck Rack’s “What Is AI Reading?” analyzed over a million links cited by AI tools. The headline finding: 89% of AI citations come from earned media (since declining to 82% as OpenAI reduced Wikipedia reliance). Every GEO vendor cites this stat as proof that the category matters.

If 89% of AI citations come from earned media, then the driver of AI visibility is the same thing agencies already sell and have always sold. Muck Rack also found only 2% overlap between the journalists most pitched by brands and those most cited by AI engines. Their interpretation: “an adjustment to pitching practices could have a major impact on a brand’s GEO.” Translation: pitch better journalists. That’s just media relations.

These GEO merchants didn’t discover a new channel. They discovered that good comms works everywhere, including in LLM outputs, and slapped a new acronym on it.

Let’s Name Names

Let’s walk through how some of the comms industry’s most credible institutions are lending legitimacy to a service category that can’t demonstrate a causal mechanism and is undercut by research published by its own practitioners.

Edelman, the world’s largest independent PR firm, launched GEOsight in May 2025. Brian Buchwald, Edelman’s Global Chair of AI and Product, told the industry: “You can’t buy your way to the top of an AI-generated answer.” Finally, someone in GEO is right about something! And ironically, it makes a tacit argument against the existence of controllable optimization levers. But nobody seems to have noticed.

The architect of GEOsight described GEO in that same press release as requiring "a fundamentally different approach — one rooted in authority, earned media, and trust signals." Authority. Earned media. Trust signals. How is that not a description of what Edelman has sold for seventy-three years?

The actual GEOsight deliverables: baseline visibility audits, “earned-first optimization strategy” (read: strategic media relations), content structuring, and performance reporting. Strip the branded language, and you are looking at an Edelman retainer circa 2019 with a dashboard bolted on.

Four months later, Zeno Group launched GEOfluent. The product promises to “track brand visibility... identify which media outlets influence each model... uncover the keywords, prompts, and narratives shaping brand mentions... benchmark against competitors... connect AI answers back to owned and earned media.” Thomas Bunn, Zeno’s Global Chief Client Impact Officer, said GEOfluent “gives clients real-time insights and strategies to influence how they show up.”

What strategies, you ask? More earned media.

Here’s the funniest part: Zeno Group is a subsidiary of DJE Holdings, which is also Edelman’s parent company. Two separately branded GEO products, launched within months of each other, sold to different client lists, delivering the same fundamental offering dressed in different clothes. It’s Ford marketing the Pinto with a new name and a Mercury badge.

INK Communications told O’Dwyer’s readers they’d identified “several controllable levers that B2B tech brands can use to influence how they show up in AI-generated responses to lower-funnel queries.” Controllable levers. For a system where the model companies themselves can’t explain citation behavior. Then they listed the levers: optimize your homepage, your product pages, and your metadata.

That’s technical SEO. They repackaged it, published it in a trade outlet, and called it a GEO-specific insight.

5WPR has built a full standalone GEO service page, positioning it alongside their traditional PR offerings as a distinct capability. The deliverables are indistinguishable from content strategy and media relations.

Then there’s the gold rush tier. Alongside the agencies that anyone in this audience has heard of, an entire ecosystem of SEO shops has renamed its services overnight. I won’t link to any of them because I don’t want to give them any added SEO juice.

The category has generated its own self-referential content economy with retainers reportedly running as high as $50,000 per month, before anyone has demonstrated that the underlying service works. Go look for a public case study anywhere offering real proof. You won’t find one.

There’s nothing wrong with an agency telling clients that earned media matters more than ever in the age of AI-driven discovery. It’s true, and it’s a conversation every comms leader should be having. But there’s a line that gets crossed when an agency packages that observation as a distinct, optimizable discipline with proprietary methodology and its own retainer; when “earned media matters” becomes “we know the levers to pull to improve your position in LLM outputs, and they’re distinct from what you’re doing now.” One is honest counsel. The other is selling certainty that doesn’t exist.

A Moment of Clarity

Don’t mistake what I’m saying above as an indictment of the actual communications work of these agencies. Edelman, Zeno, INK, and 5WPR all employ real professionals who work hard to get real results for their clients. I know many people in these shops, and they’re passionate, smart, and criminally underpaid. The Edelman Trust Barometer is the single most important longitudinal research study in the profession. And as we’ve laid out above, these agencies were already doing the things that translate to success in this new era!

This is also not an argument that LLMs haven’t had an impact on information discovery, ascertainable or not, or that they’re inherently bad. AI is changing how information gets discovered. That’s real. You’d be hard-pressed to paint me as an AI naysayer. Wanting to monitor how your brand appears across LLM outputs isn’t a crazy desire. But it’s the difference between buying a thermometer and buying something claiming to be a thermostat.

Since we’re having a moment of clarity, here’s what a purely truthful version of this service would sound like:

“We don’t know why LLMs cite what they cite. Nobody does, not the companies that built them, not the researchers who study them, not us. What we can tell you is that good communications fundamentals like authoritative earned media, structured content, and consistent brand presence across trusted platforms seem to correlate with better AI visibility. We can monitor how you’re showing up and help you do more of what appears to work. But we can’t guarantee outcomes, we can’t explain the mechanism, and the platforms change without notice.”

That’s honesty. It’s also just communications consulting with a monitoring layer. You don’t need a new acronym or a new line item. You need better comms and a dashboard.

The reason nobody says this out loud is that “keep doing good comms” doesn’t beget a new high-margin revenue line. “GEO” does. An audit with a branded name, a dashboard with a proprietary feel, monthly retainers for monitoring a system with no proven optimization surface—that’s money in the bank. The entire category exists because agencies need new things to sell, not because clients have a new problem to solve.

The Challenge

If you’re an agency selling GEO as a distinct discipline, I have five questions. I’ll keep them simple.

What is the causal mechanism between your recommended interventions and AI citation behavior? Not correlation. Causation.

Can you explain why ChatGPT and Perplexity cite different sources for the same query, and how your strategy accounts for architectures that diverge in this fundamental way?

What happens to your optimization strategy when a model updates its retrieval system, something that happens regularly, without notice?

What percentage of your GEO deliverables are things you would have recommended under your existing communications retainer? Be honest.

Can you name a single intervention unique to GEO, something you would never recommend for general communications effectiveness, that demonstrably improves AI citation rates?

If GEO is real, answer those. Publicly. If it’s not, stop rebadging the same thing you’re already doing and charging for it twice.

Yep, for now the best we can do for GEO is just...the usual comms tactics

Whoa. I'm not into "takedown pieces," per se, but this was so insightful and brimming with integrity. Thanks for the education and the call to truthiness. To Question 5, from what I've read, it seems "get on Reddit" would be a GEO-specific intervention many are considering now vs pre LLMs. But to your overarching point, that was a smart play for many brands pre-LLMs, anyway.